In today’s lab, we will build a basic LLM-based chatbot with LangChain and LangGraph. We will try a few different settings and see how they affect the chatbot’s behavior.

Our plan for today:

Prerequisites¶

To start with the tutorial, complete the steps Prerequisites, Environment Setup, and Getting API Key from the LLM Inference Guide.

You need to install the following packages:

!pip install langchain_nvidia_ai_endpoints python-dotenv langgraph

1. Recap: Messages and Chat Models 💬¶

ChatModels provide a simple and intuitive interface for running inference on LLMs from different providers. A chat model accepts a sequence of messages and returns the LLM’s generation. Different message types help control the model’s behavior in multi-turn settings.

There are 3 basic message types:

SystemMessage: sets the LLM role and describes the desired behavior

HumanMessage: user input

AIMessage: model output

from langchain_core.messages import SystemMessage, HumanMessage
from langchain_nvidia_ai_endpoints import ChatNVIDIA
from langchain_core.rate_limiters import InMemoryRateLimiter

# read system variables
import os
import dotenv

dotenv.load_dotenv()  # loads the .env file variables into os.environ

True

messages = [
    SystemMessage(
        content="You are a helpful and honest assistant."  # role
    ),
    HumanMessage(
        content="What is the distance between the Earth and the Moon?"  # user request
    ),
]

# choose any model, catalogue is available under https://build.nvidia.com/models
MODEL_NAME = "meta/llama-3.3-70b-instruct"

# this rate limiter will ensure we do not exceed the rate limit
# of 40 RPM given by NVIDIA
rate_limiter = InMemoryRateLimiter(
    requests_per_second=35 / 60,  # 35 requests per minute to be sure
    check_every_n_seconds=0.1,  # wake up every 100 ms to check whether allowed to make a request
    max_bucket_size=7,  # controls the maximum burst size
)

llm = ChatNVIDIA(
    model=MODEL_NAME,
    api_key=os.getenv("NVIDIA_API_KEY"),
    temperature=0,  # ensure reproducibility
    rate_limiter=rate_limiter,  # bind the rate limiter
)

llm.invoke(messages).content

"The average distance between the Earth and the Moon is approximately 384,400 kilometers (238,900 miles). This distance is constantly changing due to the elliptical shape of the Moon's orbit around the Earth. At its closest point (called perigee), the distance is about 356,400 kilometers (221,500 miles), and at its farthest point (apogee), the distance is about 405,500 kilometers (252,000 miles)."

2. Basic Chatbot 🤖¶

Almost there! We already have an LLM to interact with the user; now we should wrap it in some kind of interface.

For the sake of simplicity, we will limit ourselves to the most basic option: a while loop that runs until the user says "quit".

def respond(user_query):
    messages = [
        SystemMessage(
            content="You are a helpful and honest assistant."  # role
        ),
        HumanMessage(
            content=user_query  # user request
        ),
    ]
    response = llm.invoke(messages)
    return response.content

def run_chatbot():
    while True:
        user_query = input("Your message: ")
        print(f"You: {user_query}")
        if user_query.lower() == "quit":
            print("Chatbot: Bye!")
            break
        response = respond(user_query)
        print(f"Chatbot: {response}")

run_chatbot()
# Hi
# What is the 3rd planet from the sun?
# And 4th?

You: hi

Chatbot: Hello. It's nice to meet you. Is there something I can help you with or would you like to chat?

You: what is the 3rd planet from the sun?

Chatbot: The 3rd planet from the Sun is Earth.

You: and 4th?

Chatbot: This conversation has just begun. I'm happy to chat with you, but I don't have any context about what you're referring to with "and 4th." Could you please provide more information or clarify your question? I'll do my best to help.

You: quit

Chatbot: Bye!

As you can see, the chatbot has access only to the last message you pass to it, so you cannot have a coherent conversation. An easy workaround is to pass the entire message history to the chatbot so that it is aware of the previous messages. This is where the distinction between HumanMessage and AIMessage becomes crucial: the LLM needs to know what was generated by whom.

Let’s adjust our function to keep track of the entire message history. Since we will be passing the entire history to the chatbot, it makes sense to add the system message only once.

def respond(user_query, previous_messages):
    human_message = HumanMessage(
        content=user_query
    )
    previous_messages.append(human_message)  # modify in place
    response = llm.invoke(previous_messages)  # history + user query
    previous_messages.append(response)  # modify in place
    return response.content

def run_chatbot():
    system_message = SystemMessage(
        content="You are a helpful and honest assistant."  # role
    )
    messages = [system_message]
    while True:
        user_query = input("Your message: ")
        print(f"You: {user_query}")
        if user_query.lower() == "quit":
            print("Chatbot: Bye!")
            break
        response = respond(user_query, messages)
        print(f"Chatbot: {response}")

run_chatbot()
# Hi
# What is the 3rd planet from the sun?
# And 4th?
# And what is its color?

You: hi

Chatbot: Hello. It's nice to meet you. Is there something I can help you with or would you like to chat?

You: what is the 3rd planet from the Sun

Chatbot: The 3rd planet from the Sun is Earth.

You: and 4th?

Chatbot: The 4th planet from the Sun is Mars.

You: and what is its color?

Chatbot: Mars is often referred to as the "Red Planet" due to its reddish appearance, which is caused by iron oxide (or rust) in the planet's soil.

You: quit

Chatbot: Bye!

However, this solution is neither scalable nor robust: if you interact with the chatbot long enough, passing the whole message history becomes unnecessarily expensive, the chatbot takes longer to respond, and the context window can be exceeded, leading to errors. We will address that in Memory Enhancement; for now we’ll keep going with the basic variant.
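To get a feeling for how quickly the cost grows, here is a rough back-of-the-envelope sketch. It counts messages rather than tokens, and it assumes one system message plus exactly one user message and one reply per turn; the function names are made up for this illustration.

```python
def messages_sent_per_turn(turn: int) -> int:
    """Messages in the request at a given 1-based turn:
    1 system message + 2 per completed earlier turn + 1 new user message."""
    return 1 + 2 * (turn - 1) + 1


def cumulative_messages_sent(n_turns: int) -> int:
    """Total number of messages transmitted to the model over the first n_turns turns."""
    return sum(messages_sent_per_turn(t) for t in range(1, n_turns + 1))


# columns: turn number, request size at that turn, total sent so far
for n in (1, 5, 10, 50):
    print(n, messages_sent_per_turn(n), cumulative_messages_sent(n))
```

The per-request size grows linearly with the number of turns, so the total amount of text sent over a whole conversation grows roughly quadratically.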

3. Switching to LangGraph 🕸️¶

LangGraph is a powerful framework for building LLM-based applications in a graph-based manner. It extends LangChain by introducing graph-based workflows where each node can represent an agent, a tool, or a decision point. With support for branching logic, memory, backtracking, and more, LangGraph makes it easier to manage complex interactions and long-running processes. It’s especially useful for developers creating LLM-based multi-agent systems that need to reason, plan, or collaborate (both with and without human interaction).

While we are not building a complex system yet, there are a few reasons to switch to LangGraph already:

Easier data transfer. LangGraph comes with a built-in mechanism for managing messages, properties, metadata etc. -- in a word, the state of the system. For example, we will not have to add the messages to the history manually.

Persistence. LangGraph creates local snapshots of the system state, which allows it to pick up where it left off between interactions.

Graph structure. We can already use the graph structure LangGraph provides to manage the workflow easily. We won’t be using the stupid while loop anymore!

Scalability and modularity. Even though our chatbot is still basic, we will later expand it and build other complex pipelines, which LangGraph is well suited for. Thus, if we build the chatbot with LangGraph now, we will be able to improve and scale it much more easily by just connecting the necessary logic.

from typing import Annotated, List
from typing_extensions import TypedDict

from IPython.display import Image, display
from langchain_core.messages import BaseMessage
from langgraph.graph import StateGraph, START, END
from langgraph.graph.message import add_messages

The first concept you should get familiar with is the state of the system. LangGraph builds pipelines as state machines: at any given moment, the system is at a certain node, has a certain state, and makes a transition based on the defined edges. Like any state machine, a LangGraph pipeline has a start node, intermediate nodes, and an end node. When you pass input to the system, it travels from the start node through the intermediate nodes to the end node, after which the system exits. At every transition, LangGraph passes the state between the nodes. The state contains all the information you configured it to store: messages, properties etc. Each node receives the current state and returns an update to it. Thus, the system is always aware of the current situation.
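To make the state-machine idea concrete, here is a tiny pure-Python sketch. It does not use LangGraph at all; the names `TOY_START`, `TOY_END`, `toy_nodes`, `toy_edges`, and `run_machine` are invented for this illustration and only mimic the idea of nodes that receive a state and hand an updated state along the edges.

```python
from typing import Callable, Dict

TOY_START, TOY_END = "start", "end"  # stand-ins for a start and an end node


def increment(state: dict) -> dict:
    # a node: receives the current state, returns the updated state
    return {**state, "counter": state["counter"] + 1}


def double(state: dict) -> dict:
    return {**state, "counter": state["counter"] * 2}


toy_nodes: Dict[str, Callable[[dict], dict]] = {"increment": increment, "double": double}

# direct edges: each node has exactly one successor
toy_edges = {TOY_START: "increment", "increment": "double", "double": TOY_END}


def run_machine(initial_state: dict) -> dict:
    state = dict(initial_state)
    current = toy_edges[TOY_START]
    while current != TOY_END:
        state = toy_nodes[current](state)  # execute the node on the current state
        current = toy_edges[current]       # follow the edge to the next node
    return state


print(run_machine({"counter": 1}))  # {'counter': 4}
```

The input enters at the start, every node sees the state left by its predecessor, and the machine stops once it reaches the end marker.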

A state is defined as a TypedDict with all the fields you want it to have (you can add extra fields later in the workflow). If you attach a function to a field’s type declaration using Annotated, then instead of overwriting that field at each graph update, LangGraph will use this function to combine the existing value with the new one.
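The difference between overwriting a field and merging it with a function can be sketched in plain Python. This is only an illustration of the idea, not how LangGraph implements it: `toy_reducers` and `apply_update` are hypothetical names, and plain list concatenation stands in for `add_messages`, which does more than concatenate.

```python
import operator
from typing import Any, Callable, Dict

# Hypothetical reducer table: keys listed here are merged with the given function,
# all other keys are simply overwritten by the new value.
toy_reducers: Dict[str, Callable[[Any, Any], Any]] = {"messages": operator.add}


def apply_update(state: dict, update: dict) -> dict:
    """Return a new state with a partial update applied."""
    new_state = dict(state)
    for key, value in update.items():
        if key in toy_reducers:
            new_state[key] = toy_reducers[key](state[key], value)  # merge old and new
        else:
            new_state[key] = value  # overwrite
    return new_state


toy_state = {"messages": ["hi"], "n_turns": 0}
toy_state = apply_update(toy_state, {"messages": ["hello!"], "n_turns": 1})
print(toy_state)  # {'messages': ['hi', 'hello!'], 'n_turns': 1}
```

Keys that are missing from the update are left untouched, which is why a node only needs to return the fields it actually changes.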

class State(TypedDict):
    # `messages` is a list of messages of any kind. The `add_messages` function
    # in the annotation defines how this state key should be updated
    # (in this case, it appends messages to the list, rather than overwriting them)
    messages: Annotated[List[BaseMessage], add_messages]
    # Since we didn't define a function to update it, it will be rewritten at each transition
    # with the value you provide
    n_turns: int  # just for demonstration

StateGraph is the frame of the system; it will hold all the nodes and transitions.

graph_builder = StateGraph(State)

Now let’s define the nodes for our chatbot. In our case, we need three nodes:

The input node. It prompts the user for input and stores it in the messages for further interaction with the LLM.

The router node. It checks whether the user wants to exit.

The chatbot node. If the user has not asked to quit, it receives the input, passes it to the LLM, and returns the generation.

Each node is a Python function that (typically) accepts a single argument: the state. To update the state, the function should return a dict whose keys correspond to the state keys, with the updated values. For example, if you need to update only a single property in the state while the rest should remain the same, you only need to return a dict with this specific key and leave the rest out. Also remember that the update behavior depends on how you defined your state class (a value is overwritten by default, or processed by a function if one is given in Annotated).

def input_node(state: State) -> dict:
    user_query = input("Your message: ")
    human_message = HumanMessage(content=user_query)
    # add the input to the messages
    return {
        "messages": human_message  # this will append the input to the messages
    }

After we have defined the node, we can attach it to our graph. To do so, we need to add it to the graph builder under an arbitrary name.

graph_builder.add_node("input", input_node)

<langgraph.graph.state.StateGraph at 0x10e1341a0>

def respond_node(state: State) -> dict:
    messages = state["messages"]  # will already contain the user query
    n_turns = state["n_turns"]
    response = llm.invoke(messages)
    # add the response to the messages
    return {
        "messages": response,  # this will append the response to the messages
        "n_turns": n_turns + 1,  # and this will rewrite the number of turns
    }

graph_builder.add_node("respond", respond_node)

<langgraph.graph.state.StateGraph at 0x10e1341a0>

Decision nodes -- those responsible for branching -- work a bit differently. They also receive the state of the system, but instead of a state update they return the destination, i.e. the node that should be executed next according to the logic implemented in the router. The destination should be either a name we have given to a node ("respond" in our case) or a LangGraph-predefined start or end node: START or END, respectively. Alternatively, you can return arbitrary values, but then you will have to map them to the actual destinations when defining the conditional edges.

The decision nodes are not added to the graph builder as nodes; instead, they are used for branching when defining edges (below).
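The two options for a router (return the destination directly, or return an arbitrary value that is mapped to a destination) can be sketched without LangGraph as follows. Everything here is a hypothetical illustration: `resolve_destination`, `wants_to_quit`, and the plain-string destinations are made up, and the toy state stores messages as plain strings.

```python
def resolve_destination(router, state, path_map=None):
    """Call the router and translate its result into a destination name."""
    result = router(state)
    if path_map is None:
        return result        # the router already returned a destination
    return path_map[result]  # otherwise look the result up in the mapping


def wants_to_quit(state) -> bool:
    # a router that returns an arbitrary value (a bool) instead of a destination
    return state["messages"][-1].lower() == "quit"


path_map = {True: "end", False: "respond"}

print(resolve_destination(wants_to_quit, {"messages": ["hi"]}, path_map))    # respond
print(resolve_destination(wants_to_quit, {"messages": ["Quit"]}, path_map))  # end
```

Returning a bool keeps the router free of node names, at the price of having to supply the mapping wherever the router is used.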

def is_quitting_node(state: State) -> bool:

# check if the user wants to quit

user_message = state["messages"][-1].content

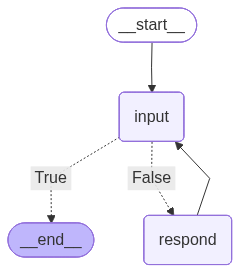

return END if user_message.lower() == "quit" else "respond"We now have all the building blocks for our chatbot. The only thing that is left is to assemble the system. For that, we should link the start node, the intermediate nodes, and the end node with edges.

There are two basic types of edges:

Direct edges. Just link two nodes unconditionally.

Conditional edges. Link the source node to a destination based on a condition implemented in a decision node.

# that says: when you start, go straight to the "input" node to receive the first message
graph_builder.add_edge(START, "input")  # equivalent to `graph_builder.set_entry_point("input")`

# that says: after you have received a message, check if the user wants to quit
# and then go either to the "respond" node or to the END node,
# depending on what the decision node returns;
# note that since it is a decision node, we didn't add it to the graph builder
# and we do not refer to it by its name and just pass it as a function
graph_builder.add_conditional_edges("input", is_quitting_node)
# `is_quitting_node` returns the destination itself ("respond" or END),
# so we don't have to pass a mapping; if it returned `False`/`True` instead,
# we would pass {False: "respond", True: END} as a third argument

# finally, after the response, we go back to the "input" node
graph_builder.add_edge("respond", "input")
# since the decision node decides when to quit, we don't need to specify the end node

<langgraph.graph.state.StateGraph at 0x10e1341a0>

Finally, we can compile the graph and see what it looks like.

chatbot = graph_builder.compile()

display(
    Image(
        chatbot.get_graph().draw_mermaid_png()
    )
)

In real-life development, you will more likely want a class for the chatbot that handles all the building at once. Here, we also add a convenient method to run the chatbot.

class Chatbot:
    _graph_path = "./graph.png"

    def __init__(self, llm):
        self.llm = llm
        self._build()
        self._display_graph()

    def _build(self):
        # graph builder
        self._graph_builder = StateGraph(State)
        # add the nodes
        self._graph_builder.add_node("input", self._input_node)
        self._graph_builder.add_node("respond", self._respond_node)
        # define edges
        self._graph_builder.add_edge(START, "input")
        self._graph_builder.add_conditional_edges("input", self._is_quitting_node, {False: "respond", True: END})
        self._graph_builder.add_edge("respond", "input")
        # compile the graph
        self._compile()

    def _compile(self):
        self.chatbot = self._graph_builder.compile()

    def _input_node(self, state: State) -> dict:
        user_query = input("Your message: ")
        human_message = HumanMessage(content=user_query)
        # add the input to the messages
        return {
            "messages": human_message  # this will append the input to the messages
        }

    def _respond_node(self, state: State) -> dict:
        messages = state["messages"]  # will already contain the user query
        n_turns = state["n_turns"]
        response = self.llm.invoke(messages)
        # add the response to the messages
        return {
            "messages": response,  # this will append the response to the messages
            "n_turns": n_turns + 1,  # and this will rewrite the number of turns
        }

    def _is_quitting_node(self, state: State) -> bool:
        # check if the user wants to quit; the bool is mapped to a destination in `_build`
        user_message = state["messages"][-1].content
        return user_message.lower() == "quit"

    def _display_graph(self):
        display(
            Image(
                self.chatbot.get_graph().draw_mermaid_png(
                    output_file_path=self._graph_path
                )
            )
        )

    # add the run method
    def run(self):
        # named `initial_state` to avoid shadowing the built-in `input`
        initial_state = {
            "messages": [
                SystemMessage(
                    content="You are a helpful and honest assistant."  # role
                )
            ],
            "n_turns": 0,
        }
        for event in self.chatbot.stream(initial_state, stream_mode="values"):  # or stream_mode="updates"
            for key, value in event.items():
                print(f"{key}:\t{value}")
            print("\n")

chatbot = Chatbot(llm)

Now we are ready to interact with the chatbot.

chatbot.run()
# Hi
# What is your name?

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='ec262ad4-b86e-4dd7-a0c8-19a86b11d106')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='ec262ad4-b86e-4dd7-a0c8-19a86b11d106'), HumanMessage(content='hi', additional_kwargs={}, response_metadata={}, id='0e6445d5-31a2-45eb-baae-93a2f217e385')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='ec262ad4-b86e-4dd7-a0c8-19a86b11d106'), HumanMessage(content='hi', additional_kwargs={}, response_metadata={}, id='0e6445d5-31a2-45eb-baae-93a2f217e385'), AIMessage(content="It's nice to meet you. Is there something I can help you with or would you like to chat?", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "It's nice to meet you. Is there something I can help you with or would you like to chat?", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 44, 'total_tokens': 67, 'completion_tokens': 23, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--f32e4cb6-2681-47d9-8954-2e8ef98ad64d-0', usage_metadata={'input_tokens': 44, 'output_tokens': 23, 'total_tokens': 67}, role='assistant')]

n_turns: 1

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='ec262ad4-b86e-4dd7-a0c8-19a86b11d106'), HumanMessage(content='hi', additional_kwargs={}, response_metadata={}, id='0e6445d5-31a2-45eb-baae-93a2f217e385'), AIMessage(content="It's nice to meet you. Is there something I can help you with or would you like to chat?", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "It's nice to meet you. Is there something I can help you with or would you like to chat?", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 44, 'total_tokens': 67, 'completion_tokens': 23, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--f32e4cb6-2681-47d9-8954-2e8ef98ad64d-0', usage_metadata={'input_tokens': 44, 'output_tokens': 23, 'total_tokens': 67}, role='assistant'), HumanMessage(content='what your name', additional_kwargs={}, response_metadata={}, id='e500c53d-d878-43ec-a7cf-20e21ed39192')]

n_turns: 1

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='ec262ad4-b86e-4dd7-a0c8-19a86b11d106'), HumanMessage(content='hi', additional_kwargs={}, response_metadata={}, id='0e6445d5-31a2-45eb-baae-93a2f217e385'), AIMessage(content="It's nice to meet you. Is there something I can help you with or would you like to chat?", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "It's nice to meet you. Is there something I can help you with or would you like to chat?", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 44, 'total_tokens': 67, 'completion_tokens': 23, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--f32e4cb6-2681-47d9-8954-2e8ef98ad64d-0', usage_metadata={'input_tokens': 44, 'output_tokens': 23, 'total_tokens': 67}, role='assistant'), HumanMessage(content='what your name', additional_kwargs={}, response_metadata={}, id='e500c53d-d878-43ec-a7cf-20e21ed39192'), AIMessage(content='I don\'t have a personal name, but I\'m often referred to as a "Language Model" or a "Virtual Assistant." If you\'d like, I can suggest some names that people often use to refer to AI assistants like me, such as "Assistant," "AI," or "Bot." Alternatively, you can give me a nickname if you prefer! What do you think?', additional_kwargs={}, response_metadata={'role': 'assistant', 'content': 'I don\'t have a personal name, but I\'m often referred to as a "Language Model" or a "Virtual Assistant." If you\'d like, I can suggest some names that people often use to refer to AI assistants like me, such as "Assistant," "AI," or "Bot." Alternatively, you can give me a nickname if you prefer! 
What do you think?', 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 79, 'total_tokens': 157, 'completion_tokens': 78, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--9989d6db-2825-4980-b275-6d6695f987df-0', usage_metadata={'input_tokens': 79, 'output_tokens': 78, 'total_tokens': 157}, role='assistant')]

n_turns: 2

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='ec262ad4-b86e-4dd7-a0c8-19a86b11d106'), HumanMessage(content='hi', additional_kwargs={}, response_metadata={}, id='0e6445d5-31a2-45eb-baae-93a2f217e385'), AIMessage(content="It's nice to meet you. Is there something I can help you with or would you like to chat?", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "It's nice to meet you. Is there something I can help you with or would you like to chat?", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 44, 'total_tokens': 67, 'completion_tokens': 23, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--f32e4cb6-2681-47d9-8954-2e8ef98ad64d-0', usage_metadata={'input_tokens': 44, 'output_tokens': 23, 'total_tokens': 67}, role='assistant'), HumanMessage(content='what your name', additional_kwargs={}, response_metadata={}, id='e500c53d-d878-43ec-a7cf-20e21ed39192'), AIMessage(content='I don\'t have a personal name, but I\'m often referred to as a "Language Model" or a "Virtual Assistant." If you\'d like, I can suggest some names that people often use to refer to AI assistants like me, such as "Assistant," "AI," or "Bot." Alternatively, you can give me a nickname if you prefer! What do you think?', additional_kwargs={}, response_metadata={'role': 'assistant', 'content': 'I don\'t have a personal name, but I\'m often referred to as a "Language Model" or a "Virtual Assistant." If you\'d like, I can suggest some names that people often use to refer to AI assistants like me, such as "Assistant," "AI," or "Bot." Alternatively, you can give me a nickname if you prefer! 
What do you think?', 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 79, 'total_tokens': 157, 'completion_tokens': 78, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--9989d6db-2825-4980-b275-6d6695f987df-0', usage_metadata={'input_tokens': 79, 'output_tokens': 78, 'total_tokens': 157}, role='assistant'), HumanMessage(content='quit', additional_kwargs={}, response_metadata={}, id='d56084d1-862b-4247-9bec-ee217ec71e33')]

n_turns: 2

4. Checkpointing 📍¶

Even though our chatbot now conveniently stores and updates the state throughout one session, the final state is erased once the system exits, so you cannot come back to the same conversation. In real life, however, you want to be able to return to the chatbot after some time and continue where you left off.

To enable that, LangGraph provides a checkpointer for saving the memory. It creates a snapshot of the state and stores it locally under a unique id. All you need to do is compile the graph with this checkpointer and pass the id in the config when running the chatbot.
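The idea behind storing snapshots under an id can be sketched with a plain dictionary. This is a simplified illustration only, not LangGraph’s checkpointer: `ToyCheckpointer` and `chat_turn` are hypothetical names, and messages are plain strings.

```python
import copy
from typing import Dict


class ToyCheckpointer:
    """Keeps the latest state snapshot per conversation id (in memory only)."""

    def __init__(self):
        self._snapshots: Dict[str, dict] = {}

    def save(self, thread_id: str, state: dict) -> None:
        self._snapshots[thread_id] = copy.deepcopy(state)

    def load(self, thread_id: str) -> dict:
        # unknown ids start from an empty history
        return copy.deepcopy(self._snapshots.get(thread_id, {"messages": []}))


toy_checkpointer = ToyCheckpointer()


def chat_turn(thread_id: str, user_message: str) -> dict:
    state = toy_checkpointer.load(thread_id)   # resume where this id left off
    state["messages"].append(user_message)
    toy_checkpointer.save(thread_id, state)    # snapshot for the next session
    return state


chat_turn("user_1", "hi my name is Max")
print(chat_turn("user_1", "do you remember my name?")["messages"])
print(chat_turn("user_2", "do you remember my name?")["messages"])
```

The second call with "user_1" sees the earlier message, while "user_2" starts from an empty history because nothing was ever saved under that id.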

from langgraph.checkpoint.memory import MemorySaver

class ChatbotWithMemory(Chatbot):
    def _compile(self):
        self.chatbot = self._graph_builder.compile(checkpointer=MemorySaver())

    def run(self, user_id):
        # named `initial_state` to avoid shadowing the built-in `input`
        initial_state = {
            "messages": [
                SystemMessage(
                    content="You are a helpful and honest assistant."
                )
            ],
            "n_turns": 0,
            "dummy_field": "some_value",
        }
        # add config
        config = {"configurable": {"thread_id": user_id}}
        for event in self.chatbot.stream(initial_state, config, stream_mode="values"):
            # change the output format
            event["messages"][-1].pretty_print()
            print("\n")

chatbot_with_memory = ChatbotWithMemory(llm)

Now compare: first, we run the simple chatbot twice: it doesn’t remember the previous session.

# first run
chatbot.run()
# Hi my name is Max
# What is my name?

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='c3c2255b-be44-40f7-bad6-04c0d8102e78')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='c3c2255b-be44-40f7-bad6-04c0d8102e78'), HumanMessage(content='hi my name is Max', additional_kwargs={}, response_metadata={}, id='cacd4974-fb84-40e0-96d7-96f9640cec06')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='c3c2255b-be44-40f7-bad6-04c0d8102e78'), HumanMessage(content='hi my name is Max', additional_kwargs={}, response_metadata={}, id='cacd4974-fb84-40e0-96d7-96f9640cec06'), AIMessage(content="Hello Max! It's nice to meet you. Is there something I can help you with or would you like to chat?", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "Hello Max! It's nice to meet you. Is there something I can help you with or would you like to chat?", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 48, 'total_tokens': 74, 'completion_tokens': 26, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--f2429058-582e-44dd-9d9a-4e15323ea033-0', usage_metadata={'input_tokens': 48, 'output_tokens': 26, 'total_tokens': 74}, role='assistant')]

n_turns: 1

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='c3c2255b-be44-40f7-bad6-04c0d8102e78'), HumanMessage(content='hi my name is Max', additional_kwargs={}, response_metadata={}, id='cacd4974-fb84-40e0-96d7-96f9640cec06'), AIMessage(content="Hello Max! It's nice to meet you. Is there something I can help you with or would you like to chat?", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "Hello Max! It's nice to meet you. Is there something I can help you with or would you like to chat?", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 48, 'total_tokens': 74, 'completion_tokens': 26, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--f2429058-582e-44dd-9d9a-4e15323ea033-0', usage_metadata={'input_tokens': 48, 'output_tokens': 26, 'total_tokens': 74}, role='assistant'), HumanMessage(content='quit', additional_kwargs={}, response_metadata={}, id='bef24caa-9467-4ae4-ba35-9d4b16a6b2d1')]

n_turns: 1

# second run
chatbot.run()
# Hi my name is Max
# What is my name?

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='0038452c-5deb-41a8-97f5-c083eff5d8e5')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='0038452c-5deb-41a8-97f5-c083eff5d8e5'), HumanMessage(content='do you remember my name?', additional_kwargs={}, response_metadata={}, id='8c54d3f4-1fea-4712-a069-d99d465ebf5c')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='0038452c-5deb-41a8-97f5-c083eff5d8e5'), HumanMessage(content='do you remember my name?', additional_kwargs={}, response_metadata={}, id='8c54d3f4-1fea-4712-a069-d99d465ebf5c'), AIMessage(content="This is the beginning of our conversation, and I don't have any prior knowledge or memory of our previous conversations. I'm a text-based AI assistant, and I don't have the ability to retain information about individual users or recall previous conversations. Each time you interact with me, it's a new conversation. If you'd like to share your name, I'd be happy to address you by it during our conversation!", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "This is the beginning of our conversation, and I don't have any prior knowledge or memory of our previous conversations. I'm a text-based AI assistant, and I don't have the ability to retain information about individual users or recall previous conversations. Each time you interact with me, it's a new conversation. If you'd like to share your name, I'd be happy to address you by it during our conversation!", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 49, 'total_tokens': 134, 'completion_tokens': 85, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--900856e4-4005-443b-9716-6a9f1953913c-0', usage_metadata={'input_tokens': 49, 'output_tokens': 85, 'total_tokens': 134}, role='assistant')]

n_turns: 1

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='0038452c-5deb-41a8-97f5-c083eff5d8e5'), HumanMessage(content='do you remember my name?', additional_kwargs={}, response_metadata={}, id='8c54d3f4-1fea-4712-a069-d99d465ebf5c'), AIMessage(content="This is the beginning of our conversation, and I don't have any prior knowledge or memory of our previous conversations. I'm a text-based AI assistant, and I don't have the ability to retain information about individual users or recall previous conversations. Each time you interact with me, it's a new conversation. If you'd like to share your name, I'd be happy to address you by it during our conversation!", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "This is the beginning of our conversation, and I don't have any prior knowledge or memory of our previous conversations. I'm a text-based AI assistant, and I don't have the ability to retain information about individual users or recall previous conversations. Each time you interact with me, it's a new conversation. If you'd like to share your name, I'd be happy to address you by it during our conversation!", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 49, 'total_tokens': 134, 'completion_tokens': 85, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--900856e4-4005-443b-9716-6a9f1953913c-0', usage_metadata={'input_tokens': 49, 'output_tokens': 85, 'total_tokens': 134}, role='assistant'), HumanMessage(content='quit', additional_kwargs={}, response_metadata={}, id='880db546-c2c8-4461-8544-77b33477e1d1')]

n_turns: 1

The checkpointed chatbot will have the memories from the previous conversations.

# first run
chatbot_with_memory.run("user_1")
# Hi my name is Max

================================ System Message ================================

You are a helpful and honest assistant.

================================ Human Message =================================

hi my name is Ma

================================== Ai Message ==================================

Hello Ma! It's nice to meet you. Is there something I can help you with or would you like to chat?

================================ Human Message =================================

quit

# second run

chatbot_with_memory.run("user_1")

# Do you remember my name?

================================ System Message ================================

You are a helpful and honest assistant.

================================ Human Message =================================

do you remember my name

================================== Ai Message ==================================

Your name is Ma. It was nice chatting with you, even if it was brief! If you want to talk again sometime, feel free to come back and say hello. Bye for now!

================================ Human Message =================================

quit

Note that this works only as long as you pass the same id. This is how you can maintain a separate conversation history for each user.

# third run

chatbot_with_memory.run("user_2")

# Do you remember my name?

================================ System Message ================================

You are a helpful and honest assistant.

================================ Human Message =================================

do you remember my name

================================== Ai Message ==================================

This is the beginning of our conversation, and I don't have any prior knowledge or memory of our previous conversations. I'm a large language model, I don't have the ability to retain information about individual users or recall previous conversations. Each time you interact with me, it's a new conversation. If you'd like to share your name, I'd be happy to address you by it during our conversation!

================================ Human Message =================================

quit
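The three runs above can be mimicked with a minimal sketch in plain Python. It is not how LangGraph implements checkpointing; `InMemoryHistoryStore` and its methods are hypothetical names used only to illustrate the idea that each id maps to its own message history.

```python
from collections import defaultdict


class InMemoryHistoryStore:
    """Hypothetical stand-in for a checkpointer: one message list per conversation id."""

    def __init__(self):
        self._histories = defaultdict(list)

    def load(self, thread_id):
        # return a copy so callers cannot mutate the stored history by accident
        return list(self._histories[thread_id])

    def append(self, thread_id, role, content):
        self._histories[thread_id].append((role, content))


store = InMemoryHistoryStore()

# first run for user_1
store.append("user_1", "human", "hi my name is Max")
store.append("user_1", "ai", "Hello Max!")

# second run with the same id sees the earlier messages
history_user_1 = store.load("user_1")

# third run with a different id starts from an empty history
history_user_2 = store.load("user_2")
```

Because the history is looked up by id, the second run can answer the question about the name, while the run for `user_2` cannot.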

5. Memory Enhancement 💾

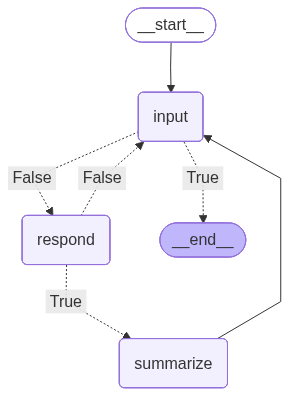

As discussed in Basic Chatbot, passing the whole history to the model on every turn is inefficient: the prompt grows with each turn, and so do the latency and the token cost. A simple way to handle this is to set a memory window, e.g. pass only the last 5 messages.
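A memory window can be sketched in a few lines. The example below is only an illustration under simplifying assumptions: messages are plain `(role, content)` tuples rather than LangChain message objects, and `apply_window` is a hypothetical helper, not part of the lab code.

```python
def apply_window(messages, window=5):
    """Keep the leading system message (if any) plus the last `window` other messages."""
    if messages and messages[0][0] == "system":
        system, rest = messages[:1], messages[1:]
    else:
        system, rest = [], list(messages)
    if window <= 0:
        # rest[-0:] would return everything, so handle this case explicitly
        return system
    return system + rest[-window:]


# build a history of a system message and 4 full turns (9 messages in total)
history = [("system", "You are a helpful and honest assistant.")]
for i in range(4):
    history.append(("human", f"question {i}"))
    history.append(("ai", f"answer {i}"))

# only the system message and the 5 most recent messages are passed on
windowed = apply_window(history, window=5)
```

Note that a fixed window may cut a turn in half: here the oldest kept message is an AI answer whose question was dropped. LangChain also ships a `trim_messages` helper in `langchain_core.messages` for this purpose; check its documentation for the available options.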

Additionally, we can summarize the previous conversation step by step, which keeps the interaction efficient while preserving a reference to the earlier chat history. To do so, we need two additions to the graph: a check for whether enough messages have piled up, and a node that creates a summary with the LLM and replaces the summarized part of the chat history with it.
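Replacing part of the history means that a node has to both delete old messages and add a new one in a single state update. The sketch below imitates this in plain Python, assuming that the messages in the state are merged by a reducer that deletes a message when it receives a removal marker with that message's id and appends everything else. `Msg`, `Remove`, and `merge` are hypothetical stand-ins, not LangGraph classes.

```python
from dataclasses import dataclass


@dataclass
class Msg:
    id: str
    role: str
    content: str


@dataclass
class Remove:
    id: str  # id of the message that should be deleted


def merge(existing, updates):
    """Simplified, hypothetical reducer: apply removals by id, append the rest."""
    result = list(existing)
    for update in updates:
        if isinstance(update, Remove):
            result = [m for m in result if m.id != update.id]
        else:
            result.append(update)
    return result


state = [
    Msg("0", "system", "You are a helpful and honest assistant."),
    Msg("1", "human", "3rd planet from Earth"),
    Msg("2", "ai", "..."),
    Msg("3", "human", "capital of Germany"),
    Msg("4", "ai", "The capital of Germany is Berlin."),
]

# mark everything except the system message for deletion
updates = [Remove(m.id) for m in state[1:]]
# and add the summary as a new human message
updates.append(Msg("5", "human", "Please continue the conversation with the summary: ..."))

new_state = merge(state, updates)
```

After the update, only the system message and the summary remain, so the next model call receives a much shorter prompt.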

from langchain_core.messages import RemoveMessage
from langchain_core.prompts.chat import ChatPromptTemplate, MessagesPlaceholder

# prompt template that will return the predefined system message
# and the additional messages you provide to it
# this will be covered in detail in the next lab
summary_template = ChatPromptTemplate.from_messages(
    [
        # will always be returned
        SystemMessage(
            "Make me a summary of our conversation. Return only the summary in 1-2 sentences. "
            "Formulate it as a user message with the instruction for the assistant to continue "
            "the conversation with the summary."
        ),
        # will be replaced by the messages you provide with the key "messages"
        MessagesPlaceholder(variable_name="messages")
    ]
)

class SummarizingChatbot(Chatbot):
    _graph_path = "./summarizing_graph.png"

    def __init__(self, llm):
        super().__init__(llm)
        self.summary_template = summary_template

    def _build(self):
        # graph builder
        self._graph_builder = StateGraph(State)
        # add the nodes
        self._graph_builder.add_node("input", self._input_node)
        self._graph_builder.add_node("respond", self._respond_node)
        self._graph_builder.add_node("summarize", self._summarize_node)
        # define edges
        self._graph_builder.add_edge(START, "input")
        self._graph_builder.add_conditional_edges("input", self._is_quitting_node, {False: "respond", True: END})
        self._graph_builder.add_conditional_edges("respond", self._summary_needed_node, {True: "summarize", False: "input"})
        self._graph_builder.add_edge("summarize", "input")
        # compile the graph
        self._compile()

    def _summary_needed_node(self, state: State) -> bool:
        return len(state["messages"]) >= 5  # system + 2 full turns

    def _summarize_node(self, state: State):
        # will pass the state to the prompt template;
        # the prompt template will match the key "messages"
        # with the messages in the state
        # and will return a sequence of messages
        # consisting of the summarization system message
        # and the sequence of previous messages in the state
        prompt = self.summary_template.invoke(state)
        response = self.llm.invoke(prompt)
        # now, mark all previous messages for deletion
        messages = [RemoveMessage(id=m.id) for m in state["messages"][1:]]  # don't remove the system message
        # and add the summary instead
        messages.append(HumanMessage(content=response.content))
        return {
            "messages": messages
        }

summarizing_chatbot = SummarizingChatbot(llm)

summarizing_chatbot.run()

# 3rd planet from Earth

# Capital of Germany

# What were we talking about now?

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='3rd planet from Earth', additional_kwargs={}, response_metadata={}, id='0f3752fd-bd48-4a2d-a7e5-743a26e61769')]

n_turns: 0

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='3rd planet from Earth', additional_kwargs={}, response_metadata={}, id='0f3752fd-bd48-4a2d-a7e5-743a26e61769'), AIMessage(content="The 3rd planet from the Sun is Earth itself. If we're looking for the 3rd planet from Earth, we need to consider the order of the planets in our solar system.\n\nThe order of the planets is: Mercury, Venus, Earth, Mars, Jupiter, Saturn, Uranus, and Neptune.\n\nSo, if we start counting from Earth:\n1. Earth (itself)\n2. Mars (the next closest planet)\n3. Jupiter (or sometimes Venus or Mars, depending on the positions of the planets in their orbits)\n\nHowever, since the positions of the planets are constantly changing due to their orbits, the 3rd planet from Earth can vary. But on average, the 3rd planet from Earth would be Jupiter or sometimes Venus, depending on their relative positions.", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "The 3rd planet from the Sun is Earth itself. If we're looking for the 3rd planet from Earth, we need to consider the order of the planets in our solar system.\n\nThe order of the planets is: Mercury, Venus, Earth, Mars, Jupiter, Saturn, Uranus, and Neptune.\n\nSo, if we start counting from Earth:\n1. Earth (itself)\n2. Mars (the next closest planet)\n3. Jupiter (or sometimes Venus or Mars, depending on the positions of the planets in their orbits)\n\nHowever, since the positions of the planets are constantly changing due to their orbits, the 3rd planet from Earth can vary. 
But on average, the 3rd planet from Earth would be Jupiter or sometimes Venus, depending on their relative positions.", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 48, 'total_tokens': 209, 'completion_tokens': 161, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--fd31f21b-6d79-4ce3-be00-65143322c0e3-0', usage_metadata={'input_tokens': 48, 'output_tokens': 161, 'total_tokens': 209}, role='assistant')]

n_turns: 1

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='3rd planet from Earth', additional_kwargs={}, response_metadata={}, id='0f3752fd-bd48-4a2d-a7e5-743a26e61769'), AIMessage(content="The 3rd planet from the Sun is Earth itself. If we're looking for the 3rd planet from Earth, we need to consider the order of the planets in our solar system.\n\nThe order of the planets is: Mercury, Venus, Earth, Mars, Jupiter, Saturn, Uranus, and Neptune.\n\nSo, if we start counting from Earth:\n1. Earth (itself)\n2. Mars (the next closest planet)\n3. Jupiter (or sometimes Venus or Mars, depending on the positions of the planets in their orbits)\n\nHowever, since the positions of the planets are constantly changing due to their orbits, the 3rd planet from Earth can vary. But on average, the 3rd planet from Earth would be Jupiter or sometimes Venus, depending on their relative positions.", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "The 3rd planet from the Sun is Earth itself. If we're looking for the 3rd planet from Earth, we need to consider the order of the planets in our solar system.\n\nThe order of the planets is: Mercury, Venus, Earth, Mars, Jupiter, Saturn, Uranus, and Neptune.\n\nSo, if we start counting from Earth:\n1. Earth (itself)\n2. Mars (the next closest planet)\n3. Jupiter (or sometimes Venus or Mars, depending on the positions of the planets in their orbits)\n\nHowever, since the positions of the planets are constantly changing due to their orbits, the 3rd planet from Earth can vary. 
But on average, the 3rd planet from Earth would be Jupiter or sometimes Venus, depending on their relative positions.", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 48, 'total_tokens': 209, 'completion_tokens': 161, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--fd31f21b-6d79-4ce3-be00-65143322c0e3-0', usage_metadata={'input_tokens': 48, 'output_tokens': 161, 'total_tokens': 209}, role='assistant'), HumanMessage(content='capital of Germany', additional_kwargs={}, response_metadata={}, id='0edb43c5-3043-4793-9299-8bce97a82b0b')]

n_turns: 1

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='3rd planet from Earth', additional_kwargs={}, response_metadata={}, id='0f3752fd-bd48-4a2d-a7e5-743a26e61769'), AIMessage(content="The 3rd planet from the Sun is Earth itself. If we're looking for the 3rd planet from Earth, we need to consider the order of the planets in our solar system.\n\nThe order of the planets is: Mercury, Venus, Earth, Mars, Jupiter, Saturn, Uranus, and Neptune.\n\nSo, if we start counting from Earth:\n1. Earth (itself)\n2. Mars (the next closest planet)\n3. Jupiter (or sometimes Venus or Mars, depending on the positions of the planets in their orbits)\n\nHowever, since the positions of the planets are constantly changing due to their orbits, the 3rd planet from Earth can vary. But on average, the 3rd planet from Earth would be Jupiter or sometimes Venus, depending on their relative positions.", additional_kwargs={}, response_metadata={'role': 'assistant', 'content': "The 3rd planet from the Sun is Earth itself. If we're looking for the 3rd planet from Earth, we need to consider the order of the planets in our solar system.\n\nThe order of the planets is: Mercury, Venus, Earth, Mars, Jupiter, Saturn, Uranus, and Neptune.\n\nSo, if we start counting from Earth:\n1. Earth (itself)\n2. Mars (the next closest planet)\n3. Jupiter (or sometimes Venus or Mars, depending on the positions of the planets in their orbits)\n\nHowever, since the positions of the planets are constantly changing due to their orbits, the 3rd planet from Earth can vary. 
But on average, the 3rd planet from Earth would be Jupiter or sometimes Venus, depending on their relative positions.", 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 48, 'total_tokens': 209, 'completion_tokens': 161, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--fd31f21b-6d79-4ce3-be00-65143322c0e3-0', usage_metadata={'input_tokens': 48, 'output_tokens': 161, 'total_tokens': 209}, role='assistant'), HumanMessage(content='capital of Germany', additional_kwargs={}, response_metadata={}, id='0edb43c5-3043-4793-9299-8bce97a82b0b'), AIMessage(content='The capital of Germany is Berlin.', additional_kwargs={}, response_metadata={'role': 'assistant', 'content': 'The capital of Germany is Berlin.', 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 221, 'total_tokens': 229, 'completion_tokens': 8, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--9a5d68a2-dc1b-472f-a17f-b86c6c8da097-0', usage_metadata={'input_tokens': 221, 'output_tokens': 8, 'total_tokens': 229}, role='assistant')]

n_turns: 2

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='Please continue the conversation with the summary: We discussed the 3rd planet from Earth and the capital of Germany, with the 3rd planet being variable but often Jupiter and the capital being Berlin.', additional_kwargs={}, response_metadata={}, id='e65ef1fc-09c4-4b24-965b-e6f152fd9a7f')]

n_turns: 2

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='Please continue the conversation with the summary: We discussed the 3rd planet from Earth and the capital of Germany, with the 3rd planet being variable but often Jupiter and the capital being Berlin.', additional_kwargs={}, response_metadata={}, id='e65ef1fc-09c4-4b24-965b-e6f152fd9a7f'), HumanMessage(content='what were we talking about now?', additional_kwargs={}, response_metadata={}, id='e719b80a-583b-4d97-821b-9ad7df37603e')]

n_turns: 2

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='Please continue the conversation with the summary: We discussed the 3rd planet from Earth and the capital of Germany, with the 3rd planet being variable but often Jupiter and the capital being Berlin.', additional_kwargs={}, response_metadata={}, id='e65ef1fc-09c4-4b24-965b-e6f152fd9a7f'), HumanMessage(content='what were we talking about now?', additional_kwargs={}, response_metadata={}, id='e719b80a-583b-4d97-821b-9ad7df37603e'), AIMessage(content='We were discussing two topics: the 3rd planet from the Sun (not from Earth, by the way) and the capital of Germany. The 3rd planet from the Sun is actually Earth itself. As for the 3rd planet from the Sun being variable, I think there might be some confusion - the order of the planets in our solar system is generally fixed. \n\nThe capital of Germany, on the other hand, is indeed Berlin. Would you like to know more about either of these topics or is there something else I can help you with?', additional_kwargs={}, response_metadata={'role': 'assistant', 'content': 'We were discussing two topics: the 3rd planet from the Sun (not from Earth, by the way) and the capital of Germany. The 3rd planet from the Sun is actually Earth itself. As for the 3rd planet from the Sun being variable, I think there might be some confusion - the order of the planets in our solar system is generally fixed. \n\nThe capital of Germany, on the other hand, is indeed Berlin. 
Would you like to know more about either of these topics or is there something else I can help you with?', 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 95, 'total_tokens': 209, 'completion_tokens': 114, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--6f0327ea-1346-4db8-ac97-f84e1bca5e1c-0', usage_metadata={'input_tokens': 95, 'output_tokens': 114, 'total_tokens': 209}, role='assistant')]

n_turns: 3

messages: [SystemMessage(content='You are a helpful and honest assistant.', additional_kwargs={}, response_metadata={}, id='d0d68f71-9843-4fc1-950b-86bfc7e7dbec'), HumanMessage(content='Please continue the conversation with the summary: We discussed the 3rd planet from Earth and the capital of Germany, with the 3rd planet being variable but often Jupiter and the capital being Berlin.', additional_kwargs={}, response_metadata={}, id='e65ef1fc-09c4-4b24-965b-e6f152fd9a7f'), HumanMessage(content='what were we talking about now?', additional_kwargs={}, response_metadata={}, id='e719b80a-583b-4d97-821b-9ad7df37603e'), AIMessage(content='We were discussing two topics: the 3rd planet from the Sun (not from Earth, by the way) and the capital of Germany. The 3rd planet from the Sun is actually Earth itself. As for the 3rd planet from the Sun being variable, I think there might be some confusion - the order of the planets in our solar system is generally fixed. \n\nThe capital of Germany, on the other hand, is indeed Berlin. Would you like to know more about either of these topics or is there something else I can help you with?', additional_kwargs={}, response_metadata={'role': 'assistant', 'content': 'We were discussing two topics: the 3rd planet from the Sun (not from Earth, by the way) and the capital of Germany. The 3rd planet from the Sun is actually Earth itself. As for the 3rd planet from the Sun being variable, I think there might be some confusion - the order of the planets in our solar system is generally fixed. \n\nThe capital of Germany, on the other hand, is indeed Berlin. 
Would you like to know more about either of these topics or is there something else I can help you with?', 'refusal': None, 'annotations': None, 'audio': None, 'function_call': None, 'tool_calls': [], 'reasoning_content': None, 'token_usage': {'prompt_tokens': 95, 'total_tokens': 209, 'completion_tokens': 114, 'prompt_tokens_details': None}, 'finish_reason': 'stop', 'model_name': 'meta/llama-3.3-70b-instruct'}, id='run--6f0327ea-1346-4db8-ac97-f84e1bca5e1c-0', usage_metadata={'input_tokens': 95, 'output_tokens': 114, 'total_tokens': 209}, role='assistant'), HumanMessage(content='quit', additional_kwargs={}, response_metadata={}, id='17af6697-4abb-4ed6-948e-ac403207fd6b')]

n_turns: 3